The Skills PMs Need to Build AI-Driven Products

AI is reshaping the way SaaS products are built, but there is a lot of work between today and a world of functional AI-driven products. To get there, PMs need the foresight to know when AI is actually valuable versus when it’s being used for show. Beyond that, they need to hone the specific skill set required to build and lead AI products effectively. So what makes PMing AI products different, and can these skills help you land your next AI-driven role?

Join us on Tuesday, October 29, 2024, at 10:30am PT / 1:30pm ET for an exclusive live webinar featuring top AI and PM experts. Whether you’re currently managing AI products or looking to break into the field, this session will help you identify real AI opportunities, avoid common pitfalls, and position yourself for high-demand AI roles.

Meet Our Panelists:

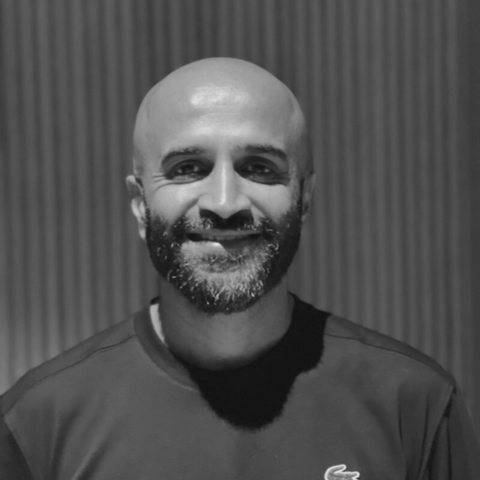

- Praveen Gujar – Director of Product at LinkedIn, bringing over 19 years of experience working with tech giants including Amazon, Twitter, and Huawei.

- Dr. Nancy Li – A PM coach specializing in helping professionals land top roles. She is an award-winning Director of Product, a YouTuber, and the host of the Product Insider Podcast. Dr. Nancy has been featured in Forbes and named a LinkedIn Top Voice.

- Andrea Dulko – Andrea joined the Google Cloud team and quickly established herself as an expert in Conversational AI. She led a team of designers and researchers in launching new contact center tools, including Dialogflow CX, Agent Assist, and CCAI Insights.

In this session, you’ll learn:

- How to distinguish between real AI opportunities and hype

- What makes managing AI-driven products different from managing traditional software products

- Key skills and strategies to excel in AI product management

- How to translate your AI product experience into a strong resume and land a high-paying AI role

We’ll also set aside time for a live Q&A session, where you can get your burning questions answered directly by our panel of experts. Don’t miss this opportunity to learn from leaders who have successfully built and launched AI products!

In this session, you’ll hear about the AI PM framework Dr. Nancy Li and her team invented to create 8 AI products in 2 months with a team of AI engineers. If you’d like to learn more, check out her workshop, How to Become an AI Product Manager and Fast Track Your Career.

Dr. Nancy has also previously shared a video on the key AI concepts that all product managers must master.

[00:00:00] Hannah Clark: Hello to whoever is here so far. If you’re not familiar with me, my name is Hannah Clark, and I’m the editor of The Product Manager. I just want to welcome everybody to our community event series. So far we’ve had an amazing time putting on these events, and we’ve gotten great feedback on them.

We’ll be handing out a feedback form at the end of this session so we can make these events even better next time. In the meantime, today’s session will focus on the skills that PMs need to build AI-driven products, and we’ll be speaking with some amazing thought leaders in the space. I’m really excited to introduce them to you.

So first up, we’ve got Praveen Gujar, Director of Product at LinkedIn. Praveen brings over 19 years of experience working with tech giants, including Amazon, Twitter, and Huawei. We’re going to ask everybody a little question to get us warmed up. So Praveen, [00:01:00] what’s the silliest use case or biggest don’t when it comes to working with AI in product?

[00:01:05] Praveen Gujar: Hi, Hannah, thanks for having me here, and hi to everyone on the call. I think the biggest don’t is building novelty products. What do I mean by that? Using AI for the sake of AI, and building a product that may not be solving a real-life problem. That would be my pick for what not to do.

[00:01:29] Hannah Clark: That’s a great answer. Next we’ve got Dr. Nancy Li, a PM coach specializing in helping professionals land top roles. She is an award-winning director of product, a YouTuber, the host of the Product Insider podcast, and also the founder of PM Accelerator. Nancy has also been featured in Forbes and is a LinkedIn Top Voice.

So, Dr. Nancy, I’ll pass it to you. What is the silliest use case or biggest don’t, as you see it, when it comes to working with AI in product?

[00:01:57] Dr. Nancy Li: Thank you for having me, Hannah. The silliest use case I’ve [00:02:00] seen is vertical farming using AI. It’s one of the most well-known, and biggest, failures in the application of AI.

That leads to why it failed, and to the biggest don’t: don’t forget about the return on investment of AI and the cost of implementing it. Think about what the end users really want, and don’t add AI just for the sake of adding AI, which leads to heavy costs. In the case of vertical farming, AI didn’t work.

[00:02:35] Hannah Clark: I very much agree with that answer as well. Next up, we’ve got Andrea Dulko. Andrea joined the Google Cloud team and quickly established herself as an expert in conversational AI. She led a team of designers and researchers in launching new contact center tools, including Dialogflow CX, Agent Assist, and CCAI Insights.

Andrea, we’ll throw it to you. What’s the silliest use case or biggest don’t when it comes to using AI in product, from your perspective?

[00:03:00] Andrea Dulko: So the biggest don’t, I would say, is saying something is AI when it really isn’t. Maybe a few years ago you could get away with that, but now people are getting a little more savvy.

As for the silliest, I’m picking this one because I’m visiting somewhere right now that has a lot of these delivery robots. I don’t think the use case is silly; I think it’s a very good use case, but the robots look very silly and very cute, and I just want to take them home with me.

So yeah, that’s my silly use case.

[00:03:35] Hannah Clark: Yeah, I have to agree. There’s a really fancy spa in my city that has implemented these, and it’s such an upscale area, but then there are these silly robot faces that just don’t fit the vibe. So I really agree with you there. All right, we’re going to get started with some housekeeping right away.

But first, for the folks tuning into the event today, we’re curious to get you warmed up: what was the reason you signed up for [00:04:00] today’s event? If you want to throw into the chat a little about yourself, maybe your job title, and why you’re curious about this topic, we’d love to hear from you.

It really helps us as we plan future events on this topic. While you’re answering that question in the chat, we’ll go through some housekeeping. This session is being recorded, and it will be made available shortly afterwards for anyone who wants to revisit the content.

We might use clips from it on our website and on our social channels, just so you’re aware. Your cameras and microphones are off by default, so you won’t appear on the recording; don’t worry about that. The rules for participating in this conversation are very simple.

We’ll be answering a set of questions we’ve prepared in advance to cover some basic topics everybody will benefit from, but we’ll also have some time towards the end for Q&A. You’re welcome to submit your questions throughout the session as they come to you, and we’ll do our best to cover as many as we can towards the end of the call.

[00:05:00] Okay, so without further ado, we’ve only got so much time to cover tons of content, so let’s get started. Section one today is how to identify opportunities for AI products and ensure that they’re useful and not just following the hype. This first question goes out to Andrea.

So, Andrea, where are you seeing the best use cases for AI today? And what do you think will be the most common use case in the future?

[00:05:25] Andrea Dulko: Yeah, I think there are some things that AI is naturally good at, like analyzing vast amounts of data. I use it a bunch in my own work for analyzing user feedback, things like that.

There’s also coding help, and anything where the path is not totally clear but it is a repetitive task. One thing we think about a lot is: [00:06:00] is AI really needed to solve this problem? If you know the steps you’re going to go through every single time and there’s no variation, you can probably just set up a basic workflow and get to the same or a similar end result.

But AI is really useful when there’s a little bit of uncertainty, or when the path needs to change each time based on situational factors.

[00:06:26] Hannah Clark: All right. Nancy, did you have anything you wanted to add to that?

[00:06:29] Dr. Nancy Li: Yeah. In the past, we have seen AI be extremely successful and useful. Number one is content creation.

What our company has experienced ourselves is crazy. For example, when I was on maternity leave, I had two marketers, and I found out that one of them, given I wasn’t around, was only working two hours per day and pretending to work eight hours. He was able to use AI to do his whole eight-hour job.

And of course, I came back and fired him. Then we downsized our team and [00:07:00] doubled our output. To be frank with you, a lot of our social media content is generated or assisted by AI, and we’re able to go viral on LinkedIn every single week. You can check it out.

So now you know our social media marketing secret. It’s definitely working extremely well. We’ve also seen more traditional ML use cases. For example, radiology has been implementing AI for image recognition and found it very successful, and in the manufacturing space, companies have been using AI for predictive maintenance.

In finance, they use AI for fraud detection, and those are really great use cases. Regarding where AI is going in the future, I really think AI agents, like personal assistants, are definitely going to take off. Apple just announced its new iPhones.

Almost all the big tech companies have introduced new concepts. I think it’s going to change people’s lives.

[00:08:00] Hannah Clark: Oh, I think it’s already changing people’s lives. I’m very aligned with you on that one. I call this next question “to AI or not to AI,” and I think it’s on everybody’s mind in some way or other.

Let’s say you have a challenge at hand or a problem area within your product. How do you determine whether building and delivering an AI solution is the right path, as opposed to building a more traditional software solution? Praveen, we’ll start off with you on this one.

[00:08:23] Praveen Gujar: Awesome. Yeah, I think that’s a great question.

Every product manager should think about this before building a solution. In fact, everyone who builds a product, whether a developer, a designer, or anyone else, should think about this question. There are maybe three things to keep in mind when answering it. Number one: what is the problem at hand, and how complex is it?

There is no point in solving a problem just by throwing AI at it. Andrea and Dr. Nancy highlighted the same thing. Look at the complexity of the problem: if it can be solved by simple rule-based approaches, we should [00:09:00] do that. That determines whether you should be leveraging AI to solve the problem or not.

So problem complexity is number one. Number two is data availability and hygiene, meaning the quality of the data. I think it’s common knowledge that the better the quality and the larger the quantity of data available, the better the model performs. Quality is equally important, because you want to reduce bias in the model output and make sure it’s fair and transparent towards the end users. So if you have both the quantity and the quality of data, there is a viable path for you to apply AI. Otherwise, you can’t force AI to solve a particular problem.

Last but not least, in many cases AI should be a means to solve a problem or build a product, not a product by itself. Unless you’re OpenAI selling LLMs, AI is not your product. You should be applying it to [00:10:00] solve a problem or build a product. Those are the three key things to keep in mind when deciding whether to AI or not to AI.

So again: problem complexity; data quality and quantity; and solving the problem at hand, treating AI as a means rather than as the problem or the product itself.
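Praveen’s three checks can be written down as a simple screening function. This is a minimal sketch, not a framework any panelist presented: the field names, the order in which the checks run, and the `MIN_EXAMPLES` threshold are all illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Tuple

MIN_EXAMPLES = 1_000  # illustrative threshold only, not an industry standard


@dataclass
class ProblemAssessment:
    # All fields are hypothetical inputs a PM would fill in.
    solvable_with_fixed_rules: bool  # problem complexity: would a deterministic workflow do?
    labeled_examples: int            # data quantity available for training and evaluation
    data_quality_ok: bool            # data hygiene: cleaned, representative, bias-checked
    ai_serves_user_problem: bool     # AI is a means to solve a real problem, not the product


def should_use_ai(a: ProblemAssessment) -> Tuple[bool, str]:
    """Apply the three checks in order and explain the first one that fails."""
    if a.solvable_with_fixed_rules:
        return False, "Use a rule-based workflow; AI adds cost without benefit."
    if a.labeled_examples < MIN_EXAMPLES or not a.data_quality_ok:
        return False, "Insufficient data quantity or quality to support a model."
    if not a.ai_serves_user_problem:
        return False, "AI would be a novelty feature, not a solution to a user problem."
    return True, "AI is a viable path; validate it with a proof of concept."


print(should_use_ai(ProblemAssessment(False, 50_000, True, True)))
```

Ordering the checks this way mirrors the answer above: a problem that fixed rules can solve never reaches the data question at all.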

[00:10:20] Hannah Clark: Really concisely answered; I appreciate that. Nancy, I know you have a bit of a framework for determining the best pathway.

Can you share that with us?

[00:10:28] Dr. Nancy Li: Yes. The framework I’m going to share with you has been used by students in our AI PM bootcamp, and they were able to launch eight AI products in two months using it. It’s very effective, so let me share how it works. When we think about when to use AI, how to use AI, and how to drive a successful AI product, I call it the “head, tail, and two circles” framework.

Okay. Starting with the head: any time we create an AI product, we need to think about [00:11:00] the product strategy. What’s the purpose of creating that AI product? Is it growing market share, or is it changing the world, like Waymo building self-driving cars, or sending people to the moon?

Right? Different visions. What’s the purpose of creating such products? For that, you use a framework we call the Gucci framework to create a product strategy. That’s what we call the head. Now, the two circles. The most important circle we invented is the AI hypothesis circle. Take any potential use case: for example, you might put AI in the microphone, or use AI to optimize this panel.

There are many great ideas, but each one carries an AI hypothesis you need to validate. Quite similar to what Praveen said regarding data strategy, there are four elements we look into in this circle. Element number one is the hypothesis: I assume AI is able to [00:12:00] help us organize a panel better, or maybe act as an AI host, or maybe do AI transcription.

Just think about what AI can do. That’s the AI hypothesis: you assume AI can do it. The second element is your data strategy, which resonates significantly with what Praveen said earlier. The quality of the data really decides how good your model is. That’s where the data strategy comes in: where do you find the data? How do you train on it? How do you clean it? How do you really control data quality?

The third element is building the AI POC, a proof of concept or mini AI, to see if it can actually optimize this panel better, for example. Then you look at the fourth element, which is validating the input and output with customers. Let’s say the AI organizes a panel better; then you validate with the customers: is this the desired outcome you’re looking for or not? Think of the vertical farming I talked about earlier: the output [00:13:00] from vertical farming using AI didn’t meet what the customers desired either, so they should have stopped right there.

So this is a loop to validate the AI hypothesis: can AI really help us create a great product or not? Once you validate this, you go to the second loop. The second loop is the traditional product management methodology, where you build your product MVP, because the product is more than the AI.

There’s UI and UX. The AI may be working, but what about the things around it? The AI UX experience is part of the MVP. So you build the MVP for your product using traditional product management methodology, continue to talk to customers, and discover and validate additional AI features with them, getting feedback. This is the traditional loop, the second loop.

Now the tail. The tail has three elements, and that’s where we think about product-market fit for your AI product, which [00:14:00] comes from three things. Number one is the scalability and generalization strategy: maybe we customized the AI solution just for today’s panel, but how can you scale and generalize your AI solution?

Number two is scaling and dominating the market, which means it’s time to really beat your competition and maybe take over Zoom entirely. I don’t know what that looks like; I’m just using it as a very silly example. And finally, the third part of the tail is exploiting your data advantage. Looping back to what we said earlier, data quality and data strategy really define how good your model’s results are. So once lots of users have started using the product during scaling, use that usage to generate additional data. Extract the best out of customer usage, and then fine-tune your model.

That’s how you make an AI product very successful. So [00:15:00] this is the framework we invented, and it’s very effective. I recommend everyone give it a try and think strategically about your AI product. Wow. Let me know if anyone has any questions; I went through this very quickly. Yeah.

[00:15:14] Hannah Clark: Yeah, if anyone has any questions on that framework, please feel free to submit them to the Q&A. I think we’ve already got a couple popping up.

We’ll move on to the next section, titled “What’s different about PMing AI products.” We’re going to be sharing some top tips and some things to avoid, so definitely listen up for this section. This next one goes out to Praveen: what are the biggest challenges that set AI product management apart from traditional software product management?

[00:15:40] Praveen Gujar: Awesome, it’s a great question. The AI landscape is evolving very rapidly, and with that, product managers have to evolve as well. You’ll see that most of my answer is themed around three key areas. Let’s start with number one, which we already touched upon: whether to AI or not to AI.

It’s a decision-making skill that a [00:16:00] product manager has to apply. Andrea already pointed out that in earlier days, we used to say, “Hey, we solve this problem through AI.” You can no longer get away with such statements unless you’re actually doing it. At the same time, running an AI model, be it a foundational model or even an SLM, is expensive, so you cannot just throw AI at every problem.

So making that decision is a very critical aspect; that’s number one. Along with that mindset change, you have to bring in a second one: agility. Everyone who builds products recognizes agility as a key thing. Your market changes, and your problem statement changes.

You need to be flexible enough to adapt, and even more so as an AI product manager. These models are constantly evolving; you hear about OpenAI coming up with new foundational models seemingly every month. So [00:17:00] how do we adapt to this really fast-paced, evolving world?

That’s a key thing as well: the product manager has to be really agile. That’s point number two. Point number three is keeping tabs on continuous evolution; continuous evolution is the theme there. Models need to be constantly trained and fine-tuned because your business dynamics change, your traffic changes, the quality of your member traffic changes. Everything changes.

And that means models need to be trained, retrained, and fine-tuned on a constant basis. So as a PM, adapting to that world of experimenting with, fine-tuning, and updating the model on a regular basis is a key mindset change we should bring in. So again, to highlight the three key things: the decision of whether to AI or not to AI, being agile, and really getting [00:18:00] to a world of continuous model updates.
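One common way to act on Praveen’s point about retraining when traffic changes is to monitor input drift. The sketch below uses the Population Stability Index (PSI), a technique swapped in for illustration rather than one the panel named; the 0.25 threshold is a commonly cited rule of thumb, and the function names and sample data are hypothetical.

```python
import math
from typing import List, Sequence


def _bin_proportions(values: Sequence[float], lo: float, width: float, bins: int) -> List[float]:
    """Share of values falling into each equal-width bin."""
    counts = [0] * bins
    for v in values:
        idx = min(int((v - lo) / width), bins - 1)  # clamp the maximum value into the last bin
        counts[idx] += 1
    # A small floor avoids log(0) and division by zero for empty bins.
    return [max(c / len(values), 1e-6) for c in counts]


def population_stability_index(baseline: Sequence[float], current: Sequence[float],
                               bins: int = 10) -> float:
    """PSI = sum((actual - expected) * ln(actual / expected)) over shared bins."""
    lo = min(min(baseline), min(current))
    hi = max(max(baseline), max(current))
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if every value is identical
    expected = _bin_proportions(baseline, lo, width, bins)
    actual = _bin_proportions(current, lo, width, bins)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


def needs_retraining(baseline: Sequence[float], current: Sequence[float],
                     threshold: float = 0.25) -> bool:
    """Flag the model for retraining when input drift exceeds the assumed threshold."""
    return population_stability_index(baseline, current) > threshold


# Hypothetical feature values: what the model was trained on vs. what traffic looks like now.
training_traffic = [i / 100 for i in range(100)]
todays_traffic = [0.9 + i / 1000 for i in range(100)]
print(needs_retraining(training_traffic, training_traffic))  # False
print(needs_retraining(training_traffic, todays_traffic))    # True
```

In practice a team would run a check like this per feature on a schedule and feed the result into the retrain-and-fine-tune cycle described above, rather than retraining on a fixed calendar alone.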

[00:18:04] Hannah Clark: Thank you so much for the detailed answer. Nancy, did you have anything to add before we move on?

[00:18:09] Dr. Nancy Li: Yeah. Praveen covered almost everything I wanted to say, but I want to add three things. The first is the AI ecosystem. The second is economics and the decision-making process around it.

The third is technical decision-making for AI product managers. Let me go through what I mean by the ecosystem. Right now the AI space, as we’ve talked about, is evolving very quickly, and not only the LLM space but also the other partners in the entire ecosystem. For example, cloud: Andrea, you work for Google Cloud.

Google has Vertex AI on Google Cloud, and Amazon has Bedrock. When Bedrock launched, they went with Claude, so when you go to Bedrock, they already have Claude installed for you. Across the entire [00:19:00] ecosystem, of course, Nvidia is constantly issuing new chips and prices are going up, right?

All of those things. Understanding the entire ecosystem is something an AI product manager needs to be very on top of, so that you not only create the best trained model but also know what else is out there in the ecosystem. For example, MLOps is also something people need to consider. Go above and beyond just one piece of the AI product, so that you become an industry expert with well-rounded information and knowledge of the space.

The second part is economics and return-on-investment decision-making, as in the vertical farming example I gave earlier. Nowadays, when we deploy any AI product, we need to consider trade-offs such as the cost of API integrations. You can pay for the ChatGPT API or any other [00:20:00] large language model out there, but there’s a cost to it. You can also think: if the cost is too high, why don’t we use open-source models? For example, there’s Llama. Yes, you can use Llama, but you need to consider the cost of GPUs.

So there’s a lot of different thinking involved in return on investment when using and defining your AI product. Do you do everything in house, or do you use other people’s existing models? And if you use other people’s existing models, have you considered safety and security?

As we know, if enterprise customers want to leverage an existing large language model to create, let’s say, a chatbot or copilot for their own company, that means they need to give away their own internal data. Now safety comes up. So we need to think about everything: return on investment, which model to select, and how it impacts the API. [00:21:00]

And then there’s stakeholder involvement; that’s what I mean. Finally, there’s technical decision-making: why we go after this model versus another model, and not just at a high level of “this is better,” but better for what. I can give you specific examples later on. Technical decision-making is also something we need to pay attention to as AI PMs, compared with traditional PMs. Wow.
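Nancy’s API-versus-open-source trade-off comes down to break-even arithmetic. This is a minimal sketch that assumes per-token API pricing and a fixed monthly GPU bill for a self-hosted model; every price below is a made-up placeholder, not a quote from any provider, and the model ignores capacity limits, latency, and the security requirements she raises.

```python
# Hypothetical cost model: API pricing per million tokens vs. a fixed monthly GPU bill.
HOURS_PER_MONTH = 730  # approximate average number of hours in a month


def monthly_api_cost(tokens_per_month: float, price_per_million_tokens: float) -> float:
    """Pay-per-use cost of calling a hosted LLM API."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def monthly_self_hosted_cost(gpu_count: int, gpu_hourly_rate: float,
                             ops_overhead: float = 0.0) -> float:
    """Fixed cost of running an open-source model on always-on GPUs, plus ops overhead."""
    return gpu_count * gpu_hourly_rate * HOURS_PER_MONTH + ops_overhead


def break_even_tokens(price_per_million_tokens: float, fixed_monthly_cost: float) -> float:
    """Monthly token volume above which self-hosting becomes cheaper than the API."""
    return fixed_monthly_cost / price_per_million_tokens * 1_000_000


def cheaper_option(tokens_per_month: float, price_per_million_tokens: float,
                   fixed_monthly_cost: float) -> str:
    api = monthly_api_cost(tokens_per_month, price_per_million_tokens)
    return "api" if api <= fixed_monthly_cost else "self-hosted"


# Placeholder numbers only.
fixed = monthly_self_hosted_cost(gpu_count=2, gpu_hourly_rate=2.0, ops_overhead=2000.0)
print(fixed)                                    # 4920.0
print(break_even_tokens(5.0, fixed))            # 984000000.0
print(cheaper_option(200_000_000, 5.0, fixed))  # api
```

With a hypothetical $5 per million tokens and two GPUs at $2 per hour plus $2,000 of monthly ops overhead, self-hosting only pays off above roughly 984 million tokens a month; below that, the API is the cheaper path.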

[00:21:27] Hannah Clark: Yeah, great answers, guys. Okay, we’ll move on to some common mistakes; I think we’re all a little curious about the things to avoid in this space. Andrea, this one goes out to you: what are some common mistakes that PMs make when managing AI products, and how can they be avoided?

[00:21:42] Andrea Dulko: Yeah, so I think one is underestimating the speed of change and evolution in the industry: honing in too much on a particular technology or a particular design pattern that might be the hot thing at the [00:22:00] moment. You really want to base products on user problems and solving those problems, not so much on saying that you’re using a particular model or particular products in their development.

The other thing I’d say is that it’s very important to understand your audience. We’re working in this space every day, and right now, at least, the level of sophistication of users varies greatly. Things we might be talking about here that seem like part of our everyday language might still be very, very new to people. So really understand your audience when you’re building AI products, including the terminology they’re familiar with and things like that. [00:23:00]

I’ll give an example. I give an intro-to-LLMs talk pretty often, and I always think, “Oh, everyone probably knows this by now; this was probably an interesting topic last year, and everyone there is already up to speed,” and you find that’s not really the case. You have to make sure you’re getting out of the bubble a little bit and really thinking about the technical sophistication of the audience you’re going after.

[00:23:32] Hannah Clark: Yeah, very good point. You always have to be mindful of who you’re speaking to. Praveen, do you want to add anything to that?

[00:23:40] Praveen Gujar: Yeah, that’s a great insight from Andrea. Just to add a few more details: I think William published a paper on this recently in IEEE Engineering Management Review.

Number one, going back to the first point I made a while back, [00:24:00] is not building a novelty product where AI is just sprinkled on top of a solution, but really focusing on an AI-integrated solution where you enable AI to do the core task: automating certain things, optimizing, or minimizing the human effort required for repetitive tasks.

Andrea also pointed to that earlier. That’s one key thing you can do. The second is not neglecting the core infrastructure needed to run the AI model. Whatever firm you work at, whether you’re a startup or a well-established, large-scale company, you need core infrastructure that enables you to run AI models effectively and leverage the data as well. That’s a very key piece of the puzzle. [00:25:00]

The last point is very similar to Andrea’s, but broadening it a little: key stakeholder engagement and trust, such as explaining what the output is, is very important. It is equally important for the end users or members.

Transparency may not be the biggest thing right now, but we expect it to become a predominant ask: our members, our key stakeholders, our partners, vendors, everyone will ask for transparency. And enabling transparency is not an easy thing. You need to have the backend systems.

You need a complete understanding of how the AI is trained, what data is used, and everything that goes into the output the AI generates. That’s key, so having that in mind right from the beginning is very important.

[00:25:51] Hannah Clark: We’ll move on to some questions around the speed of change, which we touched on a little earlier.

AI products quickly evolve as new [00:26:00] data comes in. How do you manage your product roadmaps and timelines when things can seemingly change by the hour? I’ll let anyone take a crack at this, but Andrea alluded to it earlier, if you wanted to lead off.

[00:26:13] Andrea Dulko: Yeah, I think it’s about anticipating that change is going to keep coming very rapidly for the foreseeable future, and understanding that you’re not going to be looking two years out anymore. Having the agility and flexibility to revisit your roadmap as new information comes in is super important.

And again, make sure that while your implementation details may change, the core problem you’re trying to solve and the overall product plan aren’t heavily impacted by the changes in the industry; maybe just some details of how you go about it.

Did anybody want to add anything?

[00:26:58] Praveen Gujar: Yeah, sure. I can add. I think [00:27:00] maybe another side to it also, right? I was listening into a podcast where Perplexity CEO talked about this and just elaborating some of his insights also and what I see on a day to day basis. So I think when you build a solutions and, um, product based experiences, I think just to keep it paced with evolving speed of the AI, you should build your systems and products in such a way that you should be able to plug in and plug out any different model, um, and, uh, uh, Uh, derive the value proposition out from it to extend it to your users and members as well.

So what do we mean by that? Right? Like today, a llama three, uh, is being used in your product and solutions to build something and, uh, uh, send the experience out to the customers. There is a better model that comes up that does the same job at a cheaper cost, for example, as I better throughput and also better, uh, better output out of it.

You should be able to plug and [00:28:00] play different models to leverage what they bring and pass that value on to your users. How you design your systems and backend infrastructure to enable that is a critical piece of the puzzle.
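To make the plug-in/plug-out idea concrete, here is a minimal Python sketch, assuming product code talks to every model through one narrow `generate(prompt)` interface. `TextModel`, `EchoModel`, and `ModelRouter` are hypothetical names for illustration; `EchoModel` stands in for a real model client (for example, one calling Llama 3) and is not any vendor’s API.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Protocol


class TextModel(Protocol):
    """Hypothetical interface: anything that turns a prompt into text."""

    def generate(self, prompt: str) -> str:
        ...


@dataclass
class EchoModel:
    """Placeholder for a real model client; it just tags the prompt with its name."""

    name: str

    def generate(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


class ModelRouter:
    """Product code calls the router; which model answers is just configuration."""

    def __init__(self) -> None:
        self._models: Dict[str, TextModel] = {}
        self._active: Optional[str] = None

    def register(self, key: str, model: TextModel) -> None:
        self._models[key] = model
        if self._active is None:  # the first registered model becomes the default
            self._active = key

    def activate(self, key: str) -> None:
        if key not in self._models:
            raise KeyError(f"unknown model: {key}")
        self._active = key

    def generate(self, prompt: str) -> str:
        if self._active is None:
            raise RuntimeError("no model registered")
        return self._models[self._active].generate(prompt)


router = ModelRouter()
router.register("llama-3", EchoModel("llama-3"))
router.register("cheaper-model", EchoModel("cheaper-model"))
print(router.generate("Summarize this ticket"))  # [llama-3] Summarize this ticket
router.activate("cheaper-model")  # swap models without touching product code
print(router.generate("Summarize this ticket"))  # [cheaper-model] Summarize this ticket
```

With this shape, adopting a newer or cheaper model is a registration plus an `activate` call rather than a rewrite; in practice you would still evaluate the new model before switching traffic to it.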

[00:28:16] Hannah Clark: Yeah, I’d agree. Before we move on, was there anything you wanted to add, Nancy?

[00:28:21] Dr. Nancy Li: I totally agree. On architecture design, I also want to emphasize that this is different from traditional product management, or any software architecture you have seen in the past. In this case, a lot of the time we leverage existing models.

And existing providers as well. That is very important when you design an architecture: make sure it’s easy to swap out different kinds of models if you choose to leverage existing ones. The other part is to pick a lane early. For example, if you already have all your G Suite, you’re [00:29:00] married to Google.

You’re more likely to use Gemini, and it also depends on your end users. If your enterprise end users already use G Suite (Google Workspace) for all kinds of operations, your AI solutions will probably be easier to integrate with Gemini compared with, say, ChatGPT or another Google competitor.

Oh, there’s Microsoft as well. So thinking more from an ecosystem perspective is going to help us think long term. In general, I know enterprise companies like Microsoft Copilot more; they feel it’s more secure compared with just plugging something in.

There’s also a bit of industry brand and reputation there. So think long term, strategically, and about the bigger picture ahead of time. But I do agree: the architecture is very critical.

[00:29:55] Hannah Clark: Yeah, that’s a very interesting strategy. I appreciate that. Okay, so [00:30:00] we’ll move on to barriers. One of the first barriers in adopting AI is ensuring fairness, ethics, and transparency.

This is a big topic right now, and I think one that doesn’t get enough airtime, especially when we’re talking about complex data sets, customer privacy, and all those kinds of things. So, Praveen, how do you approach this in your own work?

[00:30:21] Praveen Gujar: Yeah, that’s an awesome question.

Let’s start by saying that every organization is probably still learning here; we are all at different stages of the journey. As you pointed out, transparency, privacy, and reducing bias are the three key things you need to keep in mind as a product manager.

So let’s go one by one, starting with bias. To minimize bias, it’s very important to know how you source and use the data and how well you understand it, and how you use techniques like RAG (retrieval-augmented generation) to minimize bias or hallucination in your [00:31:00] model.

This is where the quality of the data I talked about earlier is critical; it goes a long way in reducing bias in the model outputs. Number two is privacy. You need to apply multiple techniques, including differential privacy, for example, to minimize privacy-related issues.

In your data, the backend systems and capabilities you build should be fully aware of how PII, or personally identifiable information, is used: how it flows through your ecosystem and how it influences your model output. That has to be understood, and for that you need data systems and capabilities that help you understand it. To be honest, it’s a very hairy topic, and not an easy one for a small company, which is why I said every organization is at a different stage. Last but not least, [00:32:00] transparency. I already talked about it a little, but it’s very important to provide transparency both to users and to stakeholders, including partners and vendors: what model is being used, what data is being sourced, and how it is being used to train these models.

And for end users, for model output such as the creatives Dr. Li talked about earlier, watermarking AI-generated creatives, for example, is critical for transparency, so that you bring your customers or members along and help them make informed decisions. So again, it’s important to address these issues.

That’s bias, transparency, and privacy.
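Differential privacy is mentioned above only by name. As a rough illustration of one standard technique, the Laplace mechanism, here is a Python sketch that releases a noisy count: because adding or removing one person’s record changes a count by at most 1, adding noise drawn from Laplace(0, 1/ε) gives ε-differential privacy for that single query. The function names and the data are hypothetical, and a production system should rely on a vetted differential-privacy library rather than hand-rolled noise from Python’s `random` module.

```python
import random
from typing import Callable, Iterable, TypeVar

T = TypeVar("T")


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(
    records: Iterable[T],
    predicate: Callable[[T], bool],
    epsilon: float,
    rng: random.Random,
) -> float:
    """Release a count with epsilon-differential privacy via the Laplace mechanism.

    A counting query has sensitivity 1, so the noise scale is 1 / epsilon:
    smaller epsilon means stronger privacy and a noisier answer.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1.0 / epsilon, rng)


# Hypothetical example: how many users are over 40?
ages = [23, 45, 31, 52, 67, 38, 41, 29]
rng = random.Random(7)
print(private_count(ages, lambda age: age > 40, epsilon=1.0, rng=rng))
```

Each released answer is the true count (4 here) plus random noise; repeated queries spend additional privacy budget, which is one reason real deployments track ε across queries.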

[00:32:50] Hannah Clark: Fabulous. So, Andrea, this is actually a really interesting question for you. I’m very curious whether you’ve got some specific insights [00:33:00] on ethics and transparency in AI.

[00:33:02] Andrea Dulko: Yeah, I think Praveen covered it really well. But as a developer, be very clear with your users about how their data is being used, and give them easy access to the full terms of service.

As an end user, be mindful of those things and make sure they’re available. Another thing that was mentioned is, as a developer, being transparent about sources. Any time you can ground AI responses in specific data sources to help validate accuracy, that’s super helpful.

And then as a business, if you’re adopting an AI solution, be very mindful of the vendor’s data use and privacy practices, and make sure it’s a reputable company [00:34:00] with a high bar for privacy and security.
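Grounding responses in specific sources is often done with retrieval-augmented generation (RAG). The Python sketch below is deliberately a toy, under stated assumptions: it ranks a hypothetical in-memory document set by simple word overlap (real systems typically use embeddings and a vector database) and builds a prompt that tells the model to answer only from the listed source IDs. The model call itself is omitted, and all names and documents are invented for illustration.

```python
import re
from typing import Dict, List, Set, Tuple


def tokenize(text: str) -> Set[str]:
    """Lowercase word set; a crude stand-in for embeddings."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, docs: Dict[str, str], k: int = 2) -> List[Tuple[str, str]]:
    """Return up to k (doc_id, text) pairs ranked by word overlap with the question."""
    q_words = tokenize(question)
    scored = []
    for doc_id, text in docs.items():
        overlap = len(q_words & tokenize(text))
        if overlap > 0:
            scored.append((overlap, doc_id, text))
    scored.sort(key=lambda item: (-item[0], item[1]))  # best match first, ties by ID
    return [(doc_id, text) for _, doc_id, text in scored[:k]]


def build_grounded_prompt(question: str, docs: Dict[str, str], k: int = 2) -> str:
    """Place retrieved passages, labeled with their IDs, ahead of the question."""
    passages = retrieve(question, docs, k)
    if not passages:
        return f"No supporting sources were found. Say you don't know.\nQuestion: {question}"
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in passages)
    return (
        "Answer using only the sources below and cite their IDs.\n"
        f"{context}\n"
        f"Question: {question}"
    )


# Hypothetical knowledge base.
docs = {
    "refund-policy": "Refunds are issued within 14 days of purchase.",
    "shipping": "Orders ship within 2 business days.",
    "privacy": "We never sell personal data.",
}
print(build_grounded_prompt("How many days until refunds are issued?", docs))
```

Because each passage carries its ID into the prompt, the model’s answer can cite where a claim came from, which is the validation benefit described above.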

[00:34:07] Hannah Clark: Okay, that’s great.

I’m glad we were able to cover that, because I think it’s a really important topic to address before we move on to our next section, which is our last before we head into Q&A. For folks who are interested in transitioning into a more specialized AI role, this is for you. We’re going to talk about translating your experience into a strong resume and finding an AI-driven role.

We’ll start off with a question for Praveen. What technical experience do you actually need to break into the AI product management space?

[00:34:36] Praveen Gujar: Yeah, this is a great question. Whenever I mentor people, especially those in the early stages of their professional journey, I talk about two things.

If you are a developer, you need a product mindset to be successful. You cannot just sit in a dark room and code and be successful at it, not in the long term. [00:35:00] In a similar way, if you are a product manager, you need a developer mindset. You need to understand how systems are built, how they are architected, and what pain points developers go through.

And to be a successful product manager in the long term, you need to be in the debates. It’s like the founder mindset we talk about. With that as a preamble, three things are very important. Number one is building foundational knowledge of AI. Please don’t just learn AI as a term; really understand what AI is, what it should be used for, and what the escape points are.

What core capabilities does it enable? How is it architected? What different AI models are available? What is the difference between AI and ML? These are things you can only understand when you deep dive into them. A solid foundational understanding goes a long, long way in building great products and engaging with customers and with your developers.

It’s an awesome way [00:36:00] to kickstart your career. The second one is education on data, which is very important. It’s the new oil, or whatever it’s being called right now. Understand the data and how it should be leveraged to train your models.

Understanding the limitations of the data is equally important. Being technically proficient in querying, understanding, and interpreting data is a very foundational skill for succeeding as an AI PM. I often see PMs who are unable to write a simple SQL query to pull data.

And then you get a lot of pushback from developers asking, “Hey, why can’t the PM do this job?” Querying data yourself not only builds good rapport with your developers but also makes you a very self-sufficient PM. Last but not least is ecosystem understanding, which Dr. Li already talked about.

Understanding the ecosystem is [00:37:00] equally important. What is happening in the area? What are the evolving trends? And again, don’t just understand it for the namesake. Go deeper. Play with models and build your own experience around them. A successful PM is someone who can understand and build their own product, I would say, especially in the early stage of your professional journey.
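As an illustration of the self-serve querying Praveen describes, here is a small Python sketch using the standard-library `sqlite3` module. The `users` and `events` tables, their columns, and the rows are hypothetical; the point is the shape of a typical PM question (how many users on each plan have been active since a given date) expressed as a single SQL query.

```python
import sqlite3


def build_demo_db() -> sqlite3.Connection:
    """Create a tiny in-memory database with hypothetical users/events tables."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE users (id INTEGER PRIMARY KEY, plan TEXT NOT NULL);
        CREATE TABLE events (user_id INTEGER NOT NULL, event_date TEXT NOT NULL);
        INSERT INTO users VALUES (1, 'free'), (2, 'pro'), (3, 'pro'), (4, 'free');
        INSERT INTO events VALUES
            (1, '2024-10-20'), (1, '2024-10-21'), (2, '2024-10-22'),
            (3, '2024-10-01'), (4, '2024-10-25');
        """
    )
    return conn


def active_users_by_plan(conn: sqlite3.Connection, since: str):
    """Count distinct users per plan with at least one event on or after `since`."""
    query = """
        SELECT u.plan, COUNT(DISTINCT e.user_id) AS active_users
        FROM users AS u
        JOIN events AS e ON e.user_id = u.id
        WHERE e.event_date >= ?          -- ISO dates compare correctly as text
        GROUP BY u.plan
        ORDER BY active_users DESC, u.plan
    """
    return conn.execute(query, (since,)).fetchall()


print(active_users_by_plan(build_demo_db(), "2024-10-15"))  # [('free', 2), ('pro', 1)]
```

The `?` placeholder passes the date as a parameter instead of pasting it into the SQL string, a good habit even for ad hoc analysis.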

[00:37:26] Hannah Clark: Great career advice there. In the interest of time, since we have lots of questions from audience members and we want to make sure we get through this content, we’ll stick to one owner per question for the rest of this section. This next question goes to Nancy. What specific AI-related skills or tools do you recommend PMs highlight on their resume, or learn now, to make themselves more competitive in the AI job market?

[00:37:50] Dr. Nancy Li: Great question. This list covers the things I recommend you put on your resume and the things you need to start learning right away. Okay, so there are about [00:38:00] 10 different AI keywords or areas of knowledge you need to learn. For example, you can start by learning about AI agents, conversational agents with RAG and a vector database, what MLOps is, and so on. Those are fundamental AI technical terms.

Those terms will actually be used a lot in your future job. Of course, you also need to learn the basics of deep learning and machine learning. That part is the same as before, but in addition, there are these technical terms I want you to start learning. There’s a checklist: I have a YouTube video with a top-10 checklist.

I can share it with you later. That checklist makes sure you learn all the important fundamental AI knowledge and tools. So now let’s talk about the tools. I really like Vertex AI by Google, and I actually use it myself. It’s great. I launched a machine vision product 8 years ago that helped reduce car crashes.

[00:39:00] I received the mayor’s best practice award for it, and back then, eight years ago, I only leveraged an NVIDIA model. Now I’ve explored again and found that Vertex AI is one of the best toolsets out there. I want everyone to go check it out; you don’t need to know how to code to play with it.

It was designed so that there are lots of existing tools and models you can leverage. I found I was able to train the same kind of machine vision model just using Vertex AI, with some help from ChatGPT to generate some code. I trained my own AI model within three hours on a Saturday night.

You can do it as well. So again, get that hands-on experience right away. Beyond those AI tools and the keywords and information you must learn, I also highly recommend product managers learn prompt engineering. Everybody needs to learn prompt engineering so that AI gives you the best outcome.

I always think of prompt [00:40:00] engineering like this: you can see AI as a three-year-old. If you ask smart questions, it will give you very smart, advanced answers; otherwise, it gives you an entry-level, three-year-old answer. So prompt engineering is something all product managers must learn. There are actually five different ways to do prompt engineering, which you can look into later.

That’s the more technical part. The other part is decision making, a key skill for AI product managers. For example, you need to decide which model to choose. Let me use a real-life example: we have a team inside a PM bootcamp whose product turns a picture of your kitchen into a generated interior design for a brand-new kitchen.

The concept sounds pretty easy: take an image and ask some AI to turn it into a new kitchen, right? It sounds easy, but it’s actually very hard to build, because our team experienced the [00:41:00] challenges of how to do prompt engineering with existing models and which models to choose.

For example, for image generation there are currently three models: DALL-E, Midjourney, and Stable Diffusion. When we chose among those three models, it wasn’t just theoretically choosing a model on paper; you really get your hands dirty. When we started to build our real-life AI product with software developers, we found out that DALL-E didn’t have easy API integration with the external website or apps for our users.

And it was very hard to get things out of DALL-E, so: no good API, we cut it. Midjourney has an API, and it’s able to create amazing pictures of your kitchen. However, you’re not able to adjust the temperature, meaning the creativity, of the model. Whenever you use Midjourney to generate a new kitchen, it always puts a [00:42:00] human in it, it always puts a model in it, because it looks nicer.

And then you’re like, “Oh, how can I adjust the creativity?” With Midjourney, you cannot adjust the temperature, the “creativity,” in the kitchen. So we eventually landed on Stable Diffusion, which let us adjust creativity and had good API integration. That’s what I mean by decision making and getting your hands dirty.
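For readers unfamiliar with the term, “temperature” in language-model sampling rescales the model’s raw scores before they are turned into probabilities. The Python sketch below shows that effect on made-up scores. Image generators such as Stable Diffusion expose their own, differently named controls, so treat this as an analogy for the “creativity” knob discussed above rather than a description of any specific product.

```python
import math
from typing import List


def softmax_with_temperature(logits: List[float], temperature: float) -> List[float]:
    """Convert raw scores to probabilities after dividing them by the temperature.

    Low temperature sharpens the distribution (more predictable picks);
    high temperature flattens it (more varied, "creative" picks).
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max before exp() for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


scores = [2.0, 1.0, 0.1]  # hypothetical scores for three candidate outputs
for t in (0.5, 1.0, 2.0):
    probs = softmax_with_temperature(scores, t)
    print(t, [round(p, 3) for p in probs])
```

As the temperature rises, probability shifts from the top-scoring option toward the alternatives, which is why higher settings feel more “creative” and less predictable.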

Never, never just do theoretical study. I have seen lots of people go out to interviews saying they built some chatbot. Everyone can build a chatbot nowadays, but when you go out for interviews, people ask you about real-life challenges, not just “I did an API integration with ChatGPT, so I built a chatbot.”

That’s not it, because your interviewer is going to ask you questions like: How would you deal with data drift? How do you build a data pipeline? Once data drifts, how would you monitor it? What’s the frequency? What would you do? Those [00:43:00] real-life decision-making, hands-on experiences are what the hiring manager is looking for.

So I recommend everyone get involved in the decision making of your AI product. Get your hands dirty. That doesn’t mean you have to code, but you need to be part of the thinking process: “Our data drifted and the model started to hallucinate again. What do we do? Let’s try this and this.” That very detailed perspective is what lets you back up your experience in the AI space.
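The interview questions above (how would you monitor data drift, and how often?) have many valid answers. One common, simple approach is to compare a feature’s current distribution with a baseline using the Population Stability Index (PSI). The Python sketch below assumes hand-picked bin edges and a 0.2 alert threshold, which is a widely quoted rule of thumb rather than a standard; the function names and data are hypothetical.

```python
import math
from typing import List, Sequence


def bin_shares(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Fraction of values falling in each bin; `edges` are sorted inner boundaries."""
    if not values:
        raise ValueError("values must not be empty")
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[sum(1 for e in edges if v >= e)] += 1  # bin index = edges passed
    return [c / len(values) for c in counts]


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    edges: Sequence[float],
    eps: float = 1e-4,
) -> float:
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    psi = 0.0
    for expected, actual in zip(bin_shares(baseline, edges), bin_shares(current, edges)):
        expected, actual = max(expected, eps), max(actual, eps)  # avoid log(0)
        psi += (actual - expected) * math.log(actual / expected)
    return psi


def drift_alert(baseline, current, edges, threshold: float = 0.2) -> bool:
    """Flag drift when PSI exceeds the (assumed) threshold."""
    return population_stability_index(baseline, current, edges) > threshold


# Hypothetical feature values: training-time baseline vs. this week's traffic.
baseline = [i / 100 for i in range(100)]         # spread evenly over 0.00-0.99
this_week = [0.5 + i / 200 for i in range(100)]  # shifted toward high values
edges = [0.25, 0.5, 0.75]
print(round(population_stability_index(baseline, this_week, edges), 2))
print(drift_alert(baseline, this_week, edges))  # True
```

Running a check like this on a schedule (daily or weekly, depending on traffic) is one concrete answer to “what’s the frequency?”; what to do when it fires, such as retraining or investigating the data pipeline, is the product decision.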

That’s in general what I want people to do. And finally, there are the softer skills: AI evangelism and cross-functional collaboration. Praveen mentioned earlier that there are privacy and ethics issues. That’s where cross-functional collaboration comes in: dealing with privacy and convincing your stakeholders to adopt it as well.

So, at a high level, that’s what I think AI PMs need to master: technical skills and people skills.

[00:43:57] Hannah Clark: Lots to parse there. Thank you very much, Nancy. [00:44:00] We’ve got about 15 minutes left in our session, which means we’re going to get into the Q&A right away. Before we do that, I know some folks might have to start peeling away for their next meeting.

If that’s you, thank you so much for making the time to join our session today. We really appreciate your participation. And if you’re loving this, we would like to see you at our next event. We’ve got one planned, but we haven’t quite settled on the name; we’ll send the details in our next newsletter.

Registration is not live yet, but we will be holding these events on an ongoing basis around this time, toward the end of the month, so stay tuned for the next one. Now we’ll move right into the Q&A. I’ll send this first one to Andrea. This is from Samir: I’m finding it hard to cut through so much AI noise.

What do product managers need to focus on to get started, and are there resources you can recommend that cover the breadth of technologies, as well as good sources for deep dives?

[00:44:57] Andrea Dulko: Yeah, this is a great question. [00:45:00] What I do is set aside time each day, at least an hour or two, to catch up on news.

There are several different feeds that I look at. Because the space is so competitive, I think it’s very important to treat this as more than just your day job. It needs to be something you’re very interested in and get very excited about.

And something you actually want to learn more about in your free time. In addition to Twitter and LinkedIn, there’s a great newsletter I read every day called The Neuron. So things like that. But really set aside time not just to read the articles, but to actually use the new products that are coming out.

And get the hands-on experience.

[00:45:57] Hannah Clark: Thanks for that one. We have a few more to go, [00:46:00] so I’m going to speed-round these. I also just got word that our next session will be on how to use AI to supercharge product-led growth. I wanted to throw that out there because it sounds like a really exciting topic, so keep an eye out for registration.

Now we’ll move on to our next question, which is from Eric: Are there any best practices for managing the development of ML models within a SAFe or Scrum framework, where sprints are not compatible with AI or ML development and delivery? Does anyone want to field that one? Does anyone have an answer off the top?

[00:46:29] Praveen Gujar: Sorry, I didn’t catch the last part of the question.

[00:46:34] Hannah Clark: I’ll just repeat it, maybe slower. Are there any best practices for managing the development of ML models within a SAFe or Scrum framework, where sprints are not compatible with AI and ML development and delivery?

[00:46:47] Praveen Gujar: Yeah. My one recommendation would be not to box yourself into a sprint or Scrum model, but to rely more on experimentation, [00:47:00] which lets you constantly iterate on your models and see which particular flavor or version of the model can really deliver the value you want to deliver through your product or solution.

So it’s maybe a different mindset. This goes back to the question of how an AI product manager thinks differently from a traditional PM. Here it’s more iteration-driven, rather than boxing yourself into a 15-day sprint or a story schedule.

[00:47:36] Hannah Clark: Right. Thank you for responding. This next question is from Funny: Which tools are used by Nancy’s team to build eight AI tools in such a short time? Nancy, did you want to take this one?

[00:47:45] Dr. Nancy Li: Yes, we use lots of tools. Again, it’s a framework. Let me show you which tools we use for each part of the framework.

We start from the top and directly think about [00:48:00] which existing AI/ML models we’re able to use. We explore all possible existing models, as in the kitchen renovation example I showed you earlier.

There are so many existing models. So the number one thing is that we explore all possible models based on the use cases we discussed earlier. Next, we also explored lots of no-code tools, like Replit and Bubble, so that our product managers are able to solve problems hand in hand with our software engineers.

We still have software engineers developing the AI model, but when our product managers think, “Oh, I wonder about this UI,” or something doesn’t work, they’re able to use no-code tools to play with it immediately, hand in hand with a software developer. That gives you better communication and makes your development process much faster.

And we also [00:49:00] run experiments. As I mentioned, testing AI hypotheses is a very big part of creating the best product, so you can quickly use some low-code or no-code tools to run an experiment and check it out. Third, we also leverage a lot of existing cloud solutions. As we mentioned, we use lots of Vertex AI, which is great, by the way.

I’m doing a free commercial for Google today. There’s also Azure, which has its own cloud platform, and we found that Azure gave away a lot more free cloud storage compared with Google. Later on, we started to push our students to use more of the free resources on Azure. Those are all amazing resources you should look into.

We also use TensorFlow. Basically, we check out all the ecosystems we’re able to tap into, anything that’s available on the market, and test which is best for the specific solution. And then [00:50:00] we create the product extremely fast. Some of our students’ products already have early adopters.

They have their own websites that people can use. Several students are also in the process of talking to VCs about funding their products right now. AI is really changing how products can be developed. You can do it really fast: we did a product within two months, and you can do it as well.

Just make sure to follow the framework step by step, and you can leverage it for sure.

[00:50:27] Hannah Clark: Awesome. Thank you for that. This next question is actually for Andrea. It’s from Phil: How do you use AI to make sense of historical, unstructured data to provide tangible results and value for clients?

[00:50:45] Andrea Dulko: When I saw this come through in the chat, I think it was in the context of maybe Dialogflow or something like that, or of historical conversation data. There are many tools out there. Just a few examples off the top of my head: [00:51:00] you can extract themes or topics from that historical data.

Maybe those were conversations that came through on voice, or from very old legacy systems. But you can use that as a signal to figure out what percentage of those cases could be covered by a bot or an agent.

So that’s one thing: using AI tools to uncover topics and themes within that historical data, and then translating that into how you can automate some of those recurring topics.
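Here is a rough Python sketch of the “historical data as a signal” idea, with one plainly stated substitution: instead of an AI model extracting themes, it uses hypothetical keyword rules so the example stays self-contained. It tags each past conversation with a topic, reports topic shares, and estimates what fraction falls into topics you might consider automating. The topics, keywords, and transcripts are all invented for illustration.

```python
from collections import Counter
from typing import Dict, List, Optional, Set

# Hypothetical topic rules; an AI topic-extraction tool would normally propose these themes.
TOPIC_KEYWORDS: Dict[str, List[str]] = {
    "password_reset": ["password", "reset", "locked out"],
    "billing": ["invoice", "charge", "refund"],
    "shipping": ["tracking", "delivery", "shipped"],
}


def tag_topic(transcript: str) -> Optional[str]:
    """Return the first topic whose keywords appear in the transcript, else None."""
    text = transcript.lower()
    for topic, keywords in TOPIC_KEYWORDS.items():
        if any(keyword in text for keyword in keywords):
            return topic
    return None


def topic_shares(transcripts: List[str]) -> Dict[str, float]:
    """Share of conversations per topic; untagged ones count as "other"."""
    if not transcripts:
        return {}
    counts = Counter(tag_topic(t) or "other" for t in transcripts)
    return {topic: n / len(transcripts) for topic, n in counts.items()}


def automatable_share(transcripts: List[str], automatable: Set[str]) -> float:
    """Fraction of conversations whose topic is on the automation shortlist."""
    if not transcripts:
        return 0.0
    return sum(1 for t in transcripts if tag_topic(t) in automatable) / len(transcripts)


history = [
    "I forgot my password and got locked out",
    "Where is my delivery?",
    "Question about my invoice",
    "I'd like to discuss a partnership",
]
print(topic_shares(history))
print(automatable_share(history, {"password_reset", "shipping"}))  # 0.5
```

The automatable share is only an estimate of the opportunity; whether those conversations can actually be handled well by a bot still needs testing.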

[00:51:45] Hannah Clark: Thanks for answering that. I really appreciate it. Phil, I hope you’re still with us to get that answer. This next question is from Michael: At the point of creating an AI solution, are you basically taking a person who is [00:52:00] currently doing the work and then modeling the AI to simulate that person’s decision making, from the inputs they get and how they compute that information to produce their output?

I think I’m following this. If so, are you creating a branching decision model, getting into the micro details and nuances of their decision making? Does anyone want to take that? It’s kind of a dense question. I’m sorry, Michael; I’m just trying to make sure we can give you the most valuable response.

[00:52:27] Praveen Gujar: Sure, maybe I can take a stab. I think I understand the question. Traditionally, certain functions might have been fully managed by human beings. Let’s say it’s customer support. In customer support, you make various decisions.

You go through certain checklists or workflows to fully answer the questions customers ask, especially in the B2B industry. So when you [00:53:00] look to solve this problem, you want to identify which specific stages of the workflow you can fully automate, given the technology or data you have, and, in parallel, how you can deliver value to the customer by automating them. Hypothetically, one case could be where you completely automate the first interaction with the user, and only direct them to a human being if the question doesn’t obviously fit into the two or three categories that can be answered through a link or a chatbot mechanism.

That may be a first approach to solving the problem. Second, you could think about enabling the customer support team itself: provide AI-powered tools internally so they can retrieve information or answer customers’ questions while live-chatting with them, much faster than they otherwise could with their [00:54:00] previous tools.

That’s another way to solve the problem at hand. So there are multiple ways to think about it, but really it goes back to the fundamental process we talked about: understanding the problem at hand and what value AI can actually add, and then automating it, eliminating or minimizing the work needed from a human being.
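The first approach Praveen describes (automate the first interaction, and hand off to a person when a question doesn’t clearly fit a known category) can be sketched as a small routing rule. This Python sketch assumes some upstream intent classifier, not shown, that returns a confidence score per category; the categories, the placeholder example.com URLs, and the 0.8 threshold are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Optional

# Hypothetical self-serve answers; the example.com URLs are placeholders.
SELF_SERVE_ANSWERS: Dict[str, str] = {
    "password_reset": "You can reset your password here: https://example.com/reset",
    "order_status": "You can track your order here: https://example.com/orders",
}


@dataclass
class Routing:
    handled_by: str  # "bot" or "human"
    reply: Optional[str] = None


def route_first_message(scores: Dict[str, float], threshold: float = 0.8) -> Routing:
    """Answer automatically only for a confident match to a self-serve category."""
    if not scores:
        return Routing("human")
    category, confidence = max(scores.items(), key=lambda item: item[1])
    if category in SELF_SERVE_ANSWERS and confidence >= threshold:
        return Routing("bot", SELF_SERVE_ANSWERS[category])
    return Routing("human")  # unclear or unsupported questions go to a person


print(route_first_message({"password_reset": 0.93, "billing": 0.05}))
print(route_first_message({"password_reset": 0.55, "billing": 0.40}))
```

Raising the threshold sends more conversations to people; lowering it automates more at the risk of wrong answers, which is the kind of trade-off worth measuring before rollout.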

[00:54:26] Hannah Clark: Speaking of eliminating human beings, I’ve got a question here from Funny: How close is AI to replacing product managers? I feel like this is a very hot-button question. Does anyone have a strong opinion on this one?

[00:54:40] Dr. Nancy Li: I have something to say about this. I’ll volunteer for it, since it’s about people getting replaced.

I investigated this topic and found it very, very interesting. In summary, product managers are not going to be replaced by AI soon, because of the human touch, not the technical [00:55:00] part: building rapport. Nowadays, if everything is generated by AI, people don’t trust AI anymore. So whoever still has a human touch, meaning soft skills and interpersonal skills, is going to be the winner.

Obviously, the words out of the AI can be generated, but who you talk to makes a great difference. So in general, we’re not going to get replaced, but AI is able to help product managers speed up the process significantly. From the technical perspective, product managers also need to watch out for any kind of hallucination and bad outcomes.

I’ve investigated different kinds of tools out there. There’s a company I really like, an Australian company called Dovetail, and their competitor is Vibery; they’re very similar. They do customer insights: you can summarize [00:56:00] voice-of-customer interviews, get results, and also generate user stories and link them back to the voice-of-customer interviews.

Those things can be done by those tools. However, the interview itself needs to be conducted by a human, because people want to build trust with a human rather than an AI. Okay. Now, on the technical decision-making process: when we tested AI, we found that sometimes when you ask, “Hey, how can I define technical KPIs for product ABCD?”, AI gives you generic answers.

About one-third of the answers are not tailored to the specific product, and even when it tries to tailor them to the product, they’re still incorrect. I’ve already tested all of those. So what I mean is that AI has some good uses, but not all AI output is correct, and that’s where the high standard of an outstanding product manager comes in.

So, in summary: we are not getting replaced, but AI can [00:57:00] help us do our work faster.

[00:57:02] Hannah Clark: Yeah, absolutely, I would agree. We all have to keep in mind that there’s still no AI that replicates the people skills and empathy required of product managers and other highly skilled professionals.

So we’re still a little far away from that. That brings us to the end of our panel today. I want to thank everybody who attended, and give a warm thank you to our panelists. Praveen, Nancy, Andrea, thank you so much for your time; we know it’s very valuable. For everyone in the audience, we’ve added a feedback form.

Michael has just posted it for you folks. If you wouldn’t mind taking a moment out of your day to fill it out, it really helps us deliver even better sessions every time we do these. So thank you in advance for filling it out, and I really appreciate your time today.

Have a great rest of your week, and we wish everyone success in their AI specializations going forward. Thank you so [00:58:00] much.